No Future! Cybernetics and the Genealogy of Time Governance

Ceci Nelson

The idea of “policing the future” has today become such a central feature of the cultural unconscious of Western governance that we are scarcely even aware of it. Where did this idea come from? What is its genealogy? In the following article, Ceci Nelson re-reads the history of cybernetics through the entanglement of time and governance. As social turbulence and ecological collapse intensified across the 20th century, the projection of the future played an increasingly central role in the reproduction of the existing order, by allowing technocratic overseers to predict and navigate the dynamics of social fragmentation over which they themselves preside. In this context, Nelson argues, the management of time became a central feature of how power attempts to legitimize, sustain, and extend contemporary forms of governance, hegemony, and domination.

In memory of Sandra

Crisis as permanent condition

Crisis constitutes the prevailing discourse of modernity. We are repeatedly warned of the economy’s destabilization and the collapse of the financial markets; democracy and liberalism are in crisis, we’re told; the European Union is fucked by Brexit, they say; we fear the destruction of the environment; sporting events are regularly drowned out by warnings of terrorism; politicians again and again proclaim the “refugee crisis”; all the while, new emergency laws transform this state of crisis into a perpetual and normalized state of being. We get used to the increased, reactive national security. The West is submerged in crisis, as if it were living through an unending apocalypse. The prophecy of crisis implies, however, that we expect or hope for a different social condition to come afterwards, whether it be punishing destruction or redemptive happiness.

Crises are always inherently bound to visions of the future, and tend to change our perception of time: they make us believe that the future can be controlled and directed from its current position into a different direction. We hope that decisions made in the present will guarantee a better future. Yet in contrast to what seems evident, the future does not emerge from the present—the present is deduced from the future. That is to say that the present is retrospectively construed from projections about the future; the future is invented to structure the present accordingly. Still, a vision of the future is not the real future, but merely one possible or desired future. In this sense, the future is an abstraction of the world as it presently exists—it is an ideal to be realized. Even though time goes by, we never arrive at the future; we merely step from one present to the next. What does this imply for the relationship between crisis and temporality? This insight leads us to our central hypothesis: There is no future! Envisioned futures are created solely for the sake of justifying decisions made in the present, and thereby legitimizing the defense of the existing order. Political visions arise in order to rule the present, just as economic utopias are pronounced in order to make us tighten our belts. The coming collapse of the system is discussed publicly merely in order to sustain it; with reference to the urgency of measures to be taken to curtail a crisis, opposing and counter-hegemonic envisioned futures are blocked and suppressed. The crisis is declared permanent in order to maintain a stable course of events and prevent deeper and more radical changes; the repetition of such declarations justifies technocratic regulation and increased repression and surveillance—after all, it is easier and more legitimate to reduce people’s freedom to act when they are in a state of constant panic. 
In short, the repeatedly proclaimed states of emergency are part of a strategy to govern time.

It is for this reason that we must abandon the idea of the apocalypse. Our resistance must interrupt the flow of events conjugated by the utopias of those in power. We must pierce through the state of emergency and expose the apocalypse as the perpetual reproduction of the existing order that it is. The fact is, these discourses—crisis, the state of emergency, and our unending apocalypse—are part of the same strategy: we are not governed in crisis, we are governed by means of crisis. Only when it is declared as such does a crisis properly become a crisis; and only once a problem is understood as omnipresent and pervasive does it truly become a crisis. Not only does the repetitive cry of “emergency” desensitize people, it also obscures which of all the various ongoing catastrophes might require changes that run against the grain of existing global power structures. The situation in Syria, for example, has become a perpetuum mobile of destruction for the preservation of power, a perpetual recurrence of chaos. We must ask: who profits from crisis? Why do we live in the never-ending state of emergency? And how does this influence our perception of the future?

The genealogy of time management as a dominant mode of governance

The cultural unconscious of the West is marked by an absence of any openness to the future. The future is not taken as it comes, but is formed and planned. This planning is of course uneven, and what is lauded as a utopian ideal by some is lived as a dystopian nightmare for others. How has it come to be that humans believe themselves able to influence and determine the future? When one retraces the cultural history of the West, from the early modern era into our present one, two things stand out: first, that the concept of the future has been constitutive for the development and expansion of the Western world; second, that this concept underwent a historical shift in the modern era, such that the future now takes precedence over the present.

The idea that the future as we envision it acts recursively on the order and development of the present came into prominence in the early modern period. In 1516, Thomas More’s Utopia was published at the same time as conquerors were being driven to imagine possible future utopias through the expansion of their territorial dominion. The conquerors of “the New World” were superior to the conquered “Indians” not merely in technical terms, but also in their ability to imagine something different from that which was given, well-tried, and passed on. By conquering Central America, Cortés took the liberty of freely disposing of these peoples’ concept of time. He prepared himself for the transformation of a world in which no calendar had predicted this event. The Aztecs did not expect this attack, and ignored all warnings. This conquest was not simply a sign that an existing utopia was being pursued at all costs, but also that a transformative hegemonic culture had developed, one no longer content to adapt to the world, but which was seeking instead to change the world according to its ideals. In the 16th century, the possible and imagined thus began to dominate the given and the real. If the expansion of Occidental culture served to differentiate the Old and the New, this was also because the climate of colonized regions near the equator rhythmically deviated from the four seasons that had structured human activities on the Old Continent in Europe. As the cyclical began to lose its power, the future was no longer seen as an act of providence. Western culture twisted free of the recurrence of events, as humans began to conceptualize themselves as the masters of their own future.

Thereafter, our understanding of time was repeatedly re-conceptualized and “corrected.” For example, the concept “before Christ” became generally accepted only in the 18th century. Time began to lose its fixed starting point, and now seemed to extend backwards and forwards into eternity. With time understood as unified and standardized, the future was now transformed into an open and unlimited continuum. The next important break in time was the concept of the modern era itself: by focusing on the contemporary, this term creates a clear line demarcating our “enlightened” present from the “backwardness” of the past. As the linear notion of time came to the fore, the cyclical understanding of time was rendered increasingly obsolete. Technological innovations enabled time to be measured and structured differently, as happened when factory lights suppressed the dichotomy between night and day. Because it usurps control over time from the forces of nature, technology always interferes in the current of time.

As a consequence of industrialization, mechanization, colonial expansion and the fight for world domination, 19th century Western culture was marked by an atmosphere of constant transformation, which would eventually sediment into a deep conviction that human progress was something akin to a natural law. The future was no longer understood as a cyclical iteration of what had already been, but rather as a temporal object of intention and desire, in which the promises of progress could be realized. This view was held, among others, by Karl Marx. Classical modernity equated time with chronology, as if a single path connected the past to the present, and would lead us into the future. Enlightenment rationality propagated the illusion of a “better society” that could be reached by following this linear path. The transformation of the fabric of time was an intervention that made the future synonymous with progress. Modernization became a normative force—or as the futurist and advocate of postindustrial society Daniel Bell explains, “one of the hallmarks of modernity is the awareness of change, and the struggle to control its direction and pace.”

In order to control the problems resulting from this constant impulse towards innovation and perpetual competition, a new science was developed: that of social engineering. Social engineering was designed to stabilize social relationships, while warding off opposition and conflicts between the poor and the rich. The aim was to integrate and “normalize” all agents through behavioral training and punishment. Social engineering was closely related to Taylorism, which emerged out of the effort to theorize the rationalization of work processes. Taylorism was associated with the principle of “saving time” by fragmenting, measuring out, and optimizing actions, a principle that remains in force to this day. Following the temporal needs of production, technology is used to speed up both work processes and time in order to produce greater profits for capitalists. Michael Ende published a book in 1973 on the consequences of this historical development, entitled Momo, or the strange story of the time-thieves and the child who brought the stolen time back to the people. Its story depicts how humans are tricked into believing that time can be gained by increasing output efficiency. Ende describes how a bureaucratic apparatus is created to manage society’s organization of time. Yet because this apparatus always demands more time from the people in order to save even more, the level of stress continuously increases. The people don’t even notice that the time they supposedly saved or originally intended to gain has evaporated into thin air. The moral of the story is simple: the management of time is a method to rule over people.

The birth of nihilism originates out of the death of the past. With World War II, the West lost its historical awareness. A new philosophy was developed out of the ashes which affirmed that “an aftermath is a beginning, not an ending.” Since it is the tendency of wars to destroy the economy while revolutionizing technology, their conclusion is often accompanied by an acceleration of time. The chaos of 1945 was seen by many as a moment of opportunity, and provoked a glut of new concepts promising to manage policy by simplification and structuring. The theoretical frameworks of Game Theory, Cybernetics, Rational Choice Theory, Operations Research and Systems Analysis were tested in wartime situations before later being applied to civil society. The promise offered by these concepts was related to the dominant Western utopia in the postwar period: the desire to build a new society, to regulate and control humanity, and to balance global powers by transforming social institutions and the world into a rational and efficient machine. What unified these frameworks was the attempt both to rationalize politics and to control, plan and shape the future through decisions in the present.

World War II led to the greatest crisis of governance in the 20th century. It fueled the West’s deep conviction that a desired, envisioned future can be brought about through decisions and actions in the present. A change within the meaning of time was central to the installation of this idea: the relation between present and future was inverted. For the future to be governed, its openness is reduced to a scenario that can be controlled in the here and now. This process is called defuturization, which forms the dominant conceptualization of time in the present era. To grasp how defuturization was created, we first analyze the reciprocal relationship between prediction and course correction. After doing so, we consider why the future is so often conceptualized as a threat.

“Defuturization,” or the relevance of the present

During the first industrial revolution, amidst the rampant enthusiasm for progress, public focus was drawn away from the present. Work was said to serve the flourishing of civilization in a far, far away future somewhere beyond the grey horizon. The bourgeoisie’s focus on progress and modernity glorified the future, and the present was seen as a transient and uninteresting threshold. After belief in the final triumph of reason was abandoned following World War I, and considering the possibility of the world’s complete destruction by nuclear war after World War II, the originally positive connotations of the future were destroyed. What became of the future after this disenchantment? In the face of the fact that nuclear war could gravely affect society for generations, and possibly even wipe out the existence of humans completely, the meaning of time began to change. Gradually, the sense of time began to differ from that of classical modernity, when people in the West had been governed by hope. Before Hiroshima, the future could be seen as a positive horizon towards which humanity was progressing. With the advent of nuclear weapons and their destructive potential, it became absolutely necessary to rule out certain outcomes from future possibilities. As Günther Anders notes, this human-made scenario risked turning the future into a game of Russian roulette, and “because the effects of what we do today will remain, we already reach the future; the future is already present in a pragmatic sense.”

With the darkening nuclear fate of humankind on the horizon, the future receded behind a veil of uncertainty, leaving the present to take on an increasingly crucial relevance. In place of the Enlightenment ideal of the perfectibility of human beings, the sciences now concern themselves with the attempt to control a menacing and essentially open-ended future. Despite the evident failures of the linear conception of time, rather than calling our teleological beliefs into question, postwar epistemology spliced and shortened the duration of time into apparently measurable steps. In order to plan and decide, diversity and complexity had to be reduced to factors that could be controlled here and now; the focus consequently shifted away from reason, towards regulation and feedback control. The war created new opportunities for those aspiring to actively reshape society. Scientists and engineers volunteered to help by reducing uncertainties, effectively policing the future through a technique the German systems theorist Niklas Luhmann would refer to as defuturization.

Since Antiquity, Western philosophy and theology had confronted the limits of human logic to account for possible future events. According to Luhmann’s reading, although modern society had become inordinately complex, it was still possible to reduce all possible futures through a procedure of defuturization. Since the future is characterized by a lack of knowledge and by contingency, defuturization can systematically decrease this openness by applying a binary code; thus, a future event becomes seen as either possible or not. As Luhmann concluded, “the theory of time has to transform its vague idea that ‘everything is possible in the long run’, one based on a chronological conception of time, into a concept of temporal structures with limited possibilities of change.” The perspective of defuturization relies neither on knowledge based in experience, wherein we trust in the recurrence of events, nor on an experiment-based approach that constructs universal “natural” laws from observation—both of which had constituted the methodological foundations of epistemology between the 17th and 19th centuries. In the 20th century, prognosis and forecasting instead crept to the forefront, approaches that were made possible by advances in mathematics and statistics.

As the priority shifted to predicting an uncertain future, a new group of people began to take charge. Authority at the drawing board suddenly fell to young scientists and managers rather than to aging war veterans. A wealth of past experience was suddenly worth less than the ability to calculate the probable future. The young technocrats understood forecasting as a scientifically based method of policy advising, and claimed that neither experience nor the conventional methods could predict the consequences of political decisions. The very definition of cybernetics, for example, combines a complex relationship to temporality with an obsessive interest in prediction. Instead of thinking of time as a fixed form or a priori category, as Immanuel Kant had understood it, cybernetics rationalizes it down to its central unit of measurement. Norbert Wiener, who coined the term, had worked on the analysis of time series while developing a mathematical theory of prediction that was utilized during World War II for the construction of an anti-aircraft predictor. Wiener calculated the future positions of airplanes with the help of a statistical correlation between a series of points in time. With this calculation, the military was supposed to be able to determine when and where one must shoot in order to hit a specific enemy bomber. In this sense, cybernetics was developed as a method of steering present actions towards a desired result in the future. Prognosis is not to be confused with the prediction of possibilities: the aim of cybernetics is to define targets and delineate the course that leads to them in a process of feedback.

Prognosis and course correction stand in a reciprocal relationship, perpetually and incrementally adjusted as one moves forward step-by-step. Norbert Wiener based his principles on a theory that actually called the linear conceptualization of time into question, namely, the theory of relativity. Postmodern intellectuals interpreted this theory as a proof that everything is relative and everything is possible. Without a doubt, the theory of relativity led to the destabilization of a universal and homogeneous understanding of time. Far more important, however, was how the discovery of time’s relativity spurred the ambition to control, regulate and shape it.

In the 1960s, cybernetician Hasan Özbekhan remarked that no preceding era had ever been so obsessed with the future. That there exists a large number of different possible futures to choose from became a central tenet of the fields that would comprise futurology (also known as futures studies), which became so extremely important at the time. The devastation wrought by the war gave rise to the management of the future through rational, scientific methods, marking a shift toward the paradigm of defuturization. The logic behind these methods was by no means peaceful; futurology and the nuclear arms race share common roots. John von Neumann invented the equilibrium strategy of mutually assured destruction based on Game Theory, and Herman Kahn wrote essays on alternative scenarios of nuclear war, defending war with the same self-evident rhetoric with which some of his contemporaries defended free love.

Futurology’s primary achievement was the creation of technological prognosis. The results were intended to overcome a division in public discourse: positive visions of the future glorified technics, while negative visions warned of a takeover by cyborgs and artificial intelligence. To overcome wild speculations, futurists interviewed experts, asking them when particular inventions or milestones would be possible, for example, living on the moon. Their failed forecasts are a testimony to the limits of the ability to reduce an open future through defuturization. Claus Koch (a contemporary of Herman Kahn, John von Neumann, and Hasan Özbekhan) put it well: in futurology’s conclusions, only the silhouettes of the present appear.

The actual present has proven these prognoses to be false: by the year 2000 there were no artificial moons to give us daylight, and no interplanetary space flights, even though both were predicted. The futurists predicted neither the energy crises nor the environmentalist movement; they didn’t envisage the collapse of the Soviet Union, couldn’t forecast the relevance of computers, misjudged population growth, and neither the nuclear disaster in Chernobyl nor the one in Fukushima shows up in their reports. You don’t have to be a rocket scientist to see how absurd it is that the conceptual ideas of prediction were increasingly integrated into the political sphere, or that prognosis became a widely established practice. What systems theorist, government advisor, and OECD consultant Erich Jantsch said in the 1960s remains essentially true: “technological forecasting and planning have a natural and inherent tendency toward fuller integration, which, in the 1970s, may possibly result in the disappearance of forecasting as a distinguishable discipline.” The explanation for this disappearance is as follows: the futurists were employed as planning strategists, and military think tanks provided the methodological knowledge needed to carry out prediction and planning. After World War II, the military apparatus was diminished and the futurists became the employers. Some went back to university, or remained in think tanks where they fine-tuned their methods of prognosis. A considerable portion of them went into government, where academic expertise became a highly respected value, while others went on to manage large corporations like Ford and General Motors. Robert McNamara did both, well before he became head of the World Bank. This is how the early scientific attempts to control nuclear risk by calculating the future eventually became a major part of our predominant power structure.

In sum, time developed a new meaning by the middle of the 20th century. As fear of an incalculable future deepened, provoking a collapse of the modern era’s eschatological destiny, the cybernetic idea, which posits that control can be retroactively adjusted after a goal is set, brought with it the practice of structuring the future into manageable segments in order to gradually correct deviations. Yet the use of formal models is by no means a neutral way of depicting the future. Simplifications are not accurate representations, but are undeniably marked by political assumptions and can be subject to manipulation. Statistics play a key role within strategies aiming to govern the future, since they offer a framework for legitimating decisions in the present. In their hopes of stabilizing power structures and the economy, rulers rely on such methods of calculation to eliminate undesirable possible futures. The goals they set for the future operate like a feedback loop, meaning that in the final analysis, political prognoses are not deemed true because they are correct, but rather because massive resources are deployed in the service of making them correct. Thus, aren’t prognoses merely rhetorically-clad formulations of a pre-existing goal designed to steer politics toward a desired future?

Extending the present with “limits”

Filled with excitement for technology, the 1960s were characterized by reciprocal accusations of evil between the East and the West. Newspapers, science and sci-fi pushed the image of a calculable future: North America worked on a space station from which it hoped to survey the entire world, while the Soviet Union let a dog fly around the planet to test whether or not it was possible to survive in the extraterrestrial universe. Such widespread optimism was based on benefits like regular wage increases and full employment, on innovations like lasers, computers and antibiotics, on private indications of happiness such as the purchase of cars and refrigerators, and on achievements like the automation of factories. The positive worldview was thus based on the understanding that progress and prosperity would be beneficial to all, that anyone could start as a dishwasher and end up a millionaire. However, by the end of the decade, the satisfaction and economic security of the people had turned to recalcitrance, as people no longer accepted everything the government had to offer them, nor its explanation for the past; the revolts had begun.

In 1968, the Organization for Economic Co-operation and Development (OECD) held a debate on long-term changes affecting political and economic stability. The debate took place against a backdrop of a shift in popular sentiment that had become increasingly skeptical of unlimited economic growth, job security and the welfare state. The modern optimism regarding progress had long since begun to break down. The conception of time was now once again drastically transformed: as the future lost much of its appeal, the present was further extended. The nation states lost authority and yielded to the governance of supranational and globally-networked technocratic corporations.

In our epoch, governance no longer lies in the government: there is no more centralized power. Instead, domination has spread itself out, and the world has consolidated itself into a “network society” that integrates every last inch of the planet and tethers the peripheries. Today, the role of the state is solely to support and defend this “network.” The fear of instability and the wish to plan the future provoked by World War II led to the formation of supranational organizations like NATO, the UN, the IMF, the World Bank and the OECD, which sought to reconstruct the Western order and establish a counterbalance to the Soviet Union. However, these institutions only became influential in a real sense after the political and economic turmoil of the 1970s. Since then, domination has not only globalized, it has also gradually hybridized and become informal: the votes of experts were a new way for decision-makers to justify their actions. The Club of Rome, the World Economic Forum, and the Mont Pèlerin Society all strive to obtain a panoptic view of the economy and politics that would allow their stakeholders to take a proactive role in governing the globe. The origin of their power lies in the notion that one needs elite thinkers to oversee the minions.

With the empowerment of this decentralized infrastructure, the question of “democratic” legitimacy arose and became crucial, but could be left unresolved due to the priority of arguments concerning urgent practical needs. The end of the fixed exchange rates established by the Bretton Woods agreement, combined with the oil crisis of 1973, represented the beginning of a new epoch, a change of circumstances: firstly, a challenge to Western and Soviet dominance arose in the Middle East; and secondly, since public attention had shifted away from technological innovation towards raw materials, the oil crisis became associated with the beginning of the debate on resource scarcity. Here we find one more illustration of how crisis is used as a mechanism to discipline the population: humans were declared to be consumers responsible for helping to regulate the crisis. Saving energy became everybody’s duty, even though resource consumption continued to increase, an obvious contradiction.

What effects did this political and economic instability—as well as the changes in the mode of governance it provoked—have on the conception of time? The criticism of the idea of linearity represents a turning point: the idea of growth had been questioned, and the need for limits had been put forth; the peril presented by the possibility of a future troubled by resource scarcity led to an extension of the present. The future was over.

Consequently, defuturization meant drawing out, protracting, and elongating the present in order to ward off the apocalypse. Western governance had begun to fear its own destruction. While the impact of technologically oriented utopias had made itself felt by the middle of the century, since the onset of the 1970s we have lived in a continual revival of the apocalypse. In the Christian tradition the apocalypse is the end of time. Yet as a prediction that never takes place, it serves solely as an instrument to discipline the mind. Today, its function has been replaced by an endless governmental reproduction of disaster scenarios. In order to keep the apocalypse at bay, the present is frozen in a self-preserving, self-regulating system.

But a system is a form and not a conception of time. A system is composed of individual components that are hardly negligible on their own, components that interact with each other. A system stabilizes itself through the reaction between its parts. To become more powerful, it must integrate more components, control the infrastructure and the information flow, and reduce the reaction time between its parts. These decentralized and informal modes of governance were theoretically anticipated by a second generation of cyberneticians, among them Humberto Maturana and Heinz von Foerster. These theorists drew upon the idea of “dynamic systems.” We are familiar with the cliché that, because “everything is connected and interacts with everything else,” a butterfly flapping its wings in China might cause a hurricane in the United States. The idea is that dynamic systems are determined by rules; their chronological development, however, is not foreseeable, since they have neither end nor beginning. As a steering or “piloting” paradigm, cybernetics was flexible enough to integrate the system approach. Cybernetics theorizes systems as fundamentally self-preservative or autopoietic. An autopoietic system is not based on a linearity between past, present, and future; rather, the process of self-regulation lasts from the moment the system is unbalanced to the moment it finds equilibrium. John von Neumann illustrates the cybernetic interpretation of the problem concerning nonlinear systems with the maxim, “all stable processes we shall predict; all unstable processes we shall control.” The theory of self-regulated systems no longer aims to determine the course of action and eliminate possibilities for change. Instead, these possibilities are included in the system itself, and only technocratic authorities on a higher level are able (or “legitimized”) to observe and regulate them.

The relation between this reformation of cybernetics and the shift in our perspective on time becomes apparent from the jargon of the Club of Rome, a futurist association founded in 1968. Its founding members, who were at the same time officials of the OECD, were frustrated by the long-winded debates required by government bureaucracies. Presciently anticipating the post-growth debates to come in the 1970s, the Club of Rome pushed for an immediate change of course in global politics. Its leading visionary, Hasan Özbekhan, argued that rather than being left to take shape randomly, the world’s development should be planned rationally. Institutions should be created which would take over the control function for all efforts aimed at the future, which he called the “look-out.” These superordinate and farseeing institutions would not only define what possible futures there are, they would also propose paths towards their realization. Özbekhan criticized the fact that the existing international organizations neither met these requirements, nor did they have the political legitimacy to make the necessary decisions. He proposed a network of independent experts who would have the rights and freedoms to forge links and to enter into relations with all sectors of society. Seen in retrospect, Özbekhan’s proposals preceded those of the Club of Rome, which aimed to become a private body in the aforementioned sense—namely, a loose alliance of futurists, but with a different interpretation of time than the futurists before: they extended the present.

The Club of Rome’s publication, “The Limits to Growth”, adapted Özbekhan’s idea of a world in balance. Here again, mathematical simulations legitimize these forecasts. Even though their rhetoric originated in the same fear of a contingent future that had to be controlled, the Club of Rome reconceptualized the idea of defuturization: in 1972, “Limits” implied a transition from growth to equilibrium, the latter being understood as a state of balance and equality between opposing forces. The report recommends that this equilibrium be achieved by balancing positive and negative feedback loops. In their conclusion, and in accord with the emphasis on the timeless state of self-regulative autopoiesis, the Club of Rome suggested “a controlled, orderly transition from growth to global equilibrium.” The prophesied transition never happened. Nevertheless, the futurists’ ambitions to control the future proved integral to forging the self-regulating neoliberal ideology that informs the regime of governance under which we currently exist. The long recession of the 1970s made socially acceptable both the authority of a specialized class of experts and the previously unpopular idea of an autopoietic system which “we’re all a part of”. The permanent revival of “the crisis” in which we are submerged today has its origins in the 1970s: ever since this fearful turn, we have found ourselves in a permanent and self-preserving crisis. Neoliberalism not only generates itself out of crisis, it grows alongside it. Crisis is fundamental to the self-preservation of capitalism. Capitalism as a mode of governance is a historical given, and that’s why we call it “a system”.

Where are we now?

The collapse of the Eastern Bloc in 1990 was meant to be the end of history, the obsolescence of all utopian thinking. All hope was placed in the triumph of capitalism. If we say “no future”, this should be understood as a refusal of both this restriction and of defuturization: we do not want to give control over the past, present, and future to experts and those in power. In our era of perpetual apocalypse, right-wing visionaries call crisis “a chance to enforce new solutions”, which is clearly a fascist statement. What else do these backlashes signify? How can we explain the recent return to the nation-state, as evidenced by “Brexit” no less than the Covid pandemic, which signal an obvious crisis in the conception of supranational governance? Instead of accepting this reality as the consequence of our contemporary global politics, we should ask ourselves how the frameworks of crisis can be broken down entirely. How can we oppose the current mode of crisis governance? For this, it is not sufficient to simply announce, as an abstract imperative, the need to discard Western conceptions of time, whether by defining time in a different way, or else by nihilistically refusing all vision altogether; neither would have the slightest political effect. Instead, we have no choice but to develop a strategy, one capable of generating new pluralist forms of life and discourses, opening other possible futures. What do we plan to do with the “now-time” that remains? Will we extricate ourselves from our current repressive utopia, and develop an altogether different and emancipatory future?

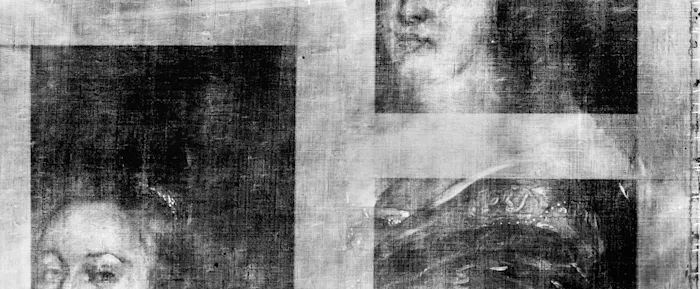

Images: David Maisel